The word tomography combines 'tomo' and 'graphy': 'tomo' comes from the Greek word 'tomos', which means section or slice, and 'graphy' refers to representation. Tomography therefore refers to any method that mathematically reconstructs the internal structure of an object from a series of projections.

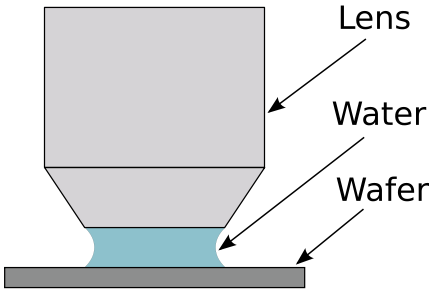

A projection here is the information obtained by probing the object with an emanation, that is, a physical process such as radiation, wave motion, a static field, or electric current that is used to study the object from outside. Medical tomography primarily uses X-ray absorption, magnetic resonance, positron emission, and sound waves (ultrasound) as the emanation.

Nonmedical applications and research use ultrasound and many different frequencies of the electromagnetic spectrum, such as microwaves and gamma rays, for probing.

Besides photons, tomography is regularly performed using electrons and neutrons. In addition to absorption of particles or radiation, tomography can be based on the scattering or emission of radiation, or on electric current. When electric current is fed consecutively through the available electrode pairs and the corresponding voltage is measured consecutively by all remaining electrode pairs, it is possible to create an image of the impedance of different regions of the volume conductor by using certain reconstruction algorithms. This imaging method is called impedance imaging.
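The drive-and-measure sequence described above can be sketched in Python. This is a minimal illustration that assumes an adjacent-pair protocol on a ring of electrodes, which is one common choice and is not stated in the text; the function name `adjacent_pattern` and the default of 16 electrodes are likewise assumptions made for the example.

```python
def adjacent_pattern(n_electrodes=16):
    """List (drive_pair, measure_pair) combinations for a ring of electrodes.

    Assumption: current is driven through neighbouring electrodes and voltage
    is read on every neighbouring pair that shares no electrode with the
    drive pair.
    """
    if n_electrodes < 4:
        raise ValueError("need at least 4 electrodes")
    pattern = []
    for i in range(n_electrodes):
        drive = (i, (i + 1) % n_electrodes)
        for j in range(n_electrodes):
            measure = (j, (j + 1) % n_electrodes)
            # Skip measurement pairs that touch a current-carrying electrode.
            if set(drive) & set(measure):
                continue
            pattern.append((drive, measure))
    return pattern


if __name__ == "__main__":
    pattern = adjacent_pattern(16)
    print(len(pattern))   # number of voltage readings in one full frame
    print(pattern[0])     # first (drive, measure) combination
```

Under this assumed protocol each of the N drive pairs is paired with N - 3 measurement pairs, so one frame contains N(N - 3) voltage readings. A reconstruction algorithm would take those readings as its input.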

Because the image is usually constructed in two dimensions from a slice of the volume conductor, the method is also called impedance tomography and ECCT (electric current computed tomography), or simply electrical impedance tomography (EIT).

Electrical Impedance Tomography (EIT) is an imaging technology that applies time-varying currents to the surface of a body and records the resulting voltages in order to reconstruct and display the electrical conductivity and permittivity in the interior of the body. The technique exploits the differences in the passive electrical properties of tissues, such as resistance and capacitance, to generate a tomographic image.

Human tissue is not simply conductive. There is evidence that many tissues also demonstrate a capacitive component of current flow, and therefore it is appropriate to speak of the specific admittance (admittivity) or specific impedance (impedivity) of tissue rather than the conductivity; hence, electric impedance tomography. Thus, EIT is an imaging method which may be used to complement X-ray tomography (computer tomography, CT), ultrasound imaging, positron emission tomography (PET), and others.
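To make the distinction between conductivity and impedivity concrete, the sketch below computes the complex admittivity from a conductivity and a relative permittivity using the standard relation admittivity = σ + jωε₀εᵣ, and takes its reciprocal as the impedivity. The function names and the tissue values in the usage section are placeholders chosen for illustration, not measured data.

```python
import math

EPS0 = 8.854187817e-12  # vacuum permittivity in F/m


def admittivity(sigma, eps_r, freq_hz):
    """Complex admittivity in S/m: conductive part plus capacitive part."""
    omega = 2 * math.pi * freq_hz
    return complex(sigma, omega * EPS0 * eps_r)


def impedivity(sigma, eps_r, freq_hz):
    """Complex impedivity in ohm*m, the reciprocal of the admittivity."""
    return 1 / admittivity(sigma, eps_r, freq_hz)


if __name__ == "__main__":
    # Hypothetical tissue-like values, for illustration only.
    sigma, eps_r, freq = 0.5, 1.0e5, 50e3
    print(admittivity(sigma, eps_r, freq))
    print(impedivity(sigma, eps_r, freq))
```

When the permittivity term is zero the impedivity reduces to the ordinary resistivity 1/σ. A nonzero capacitive term adds an imaginary part, which is why a single real conductivity does not fully describe the tissue.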

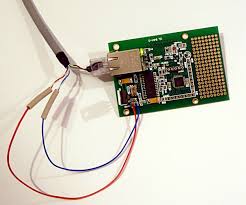

The image can be transmitted over the internet or a local area network. The system software runs on Linux and has four threads: the drawing thread, the paper tracking thread, the sending thread, and the receiving thread.
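A four-thread layout of this kind could be sketched as follows. The text names the threads but does not say how they exchange data, so the queue-based hand-off, the order of the stages, and the `run_pipeline` function are all assumptions made for illustration; an in-memory queue stands in for the network link.

```python
import queue
import threading


def run_pipeline(frames):
    """Pass frames through four cooperating threads and return what arrives."""
    tracked, drawn, wire = queue.Queue(), queue.Queue(), queue.Queue()
    received = []
    stop = object()  # sentinel that tells the next stage to finish

    def paper_tracking():
        # Assumed role: produce one tracking result per input frame.
        for frame in frames:
            tracked.put(frame)
        tracked.put(stop)

    def drawing():
        # Assumed role: turn each tracking result into an image to send.
        while (item := tracked.get()) is not stop:
            drawn.put(f"drawn:{item}")
        drawn.put(stop)

    def sending():
        # The queue `wire` is a stand-in for a real network socket.
        while (item := drawn.get()) is not stop:
            wire.put(item)
        wire.put(stop)

    def receiving():
        while (item := wire.get()) is not stop:
            received.append(item)

    workers = (paper_tracking, drawing, sending, receiving)
    threads = [threading.Thread(target=w, name=w.__name__) for w in workers]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received


if __name__ == "__main__":
    print(run_pipeline(["frame0", "frame1"]))
```

Each stage has a single producer and a single consumer, so the FIFO queues preserve frame order without extra locking.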
